The Gambler's Wisdom Among the One-Armed Bandits

On persuasion, ambiguity, and knowing when to walk away

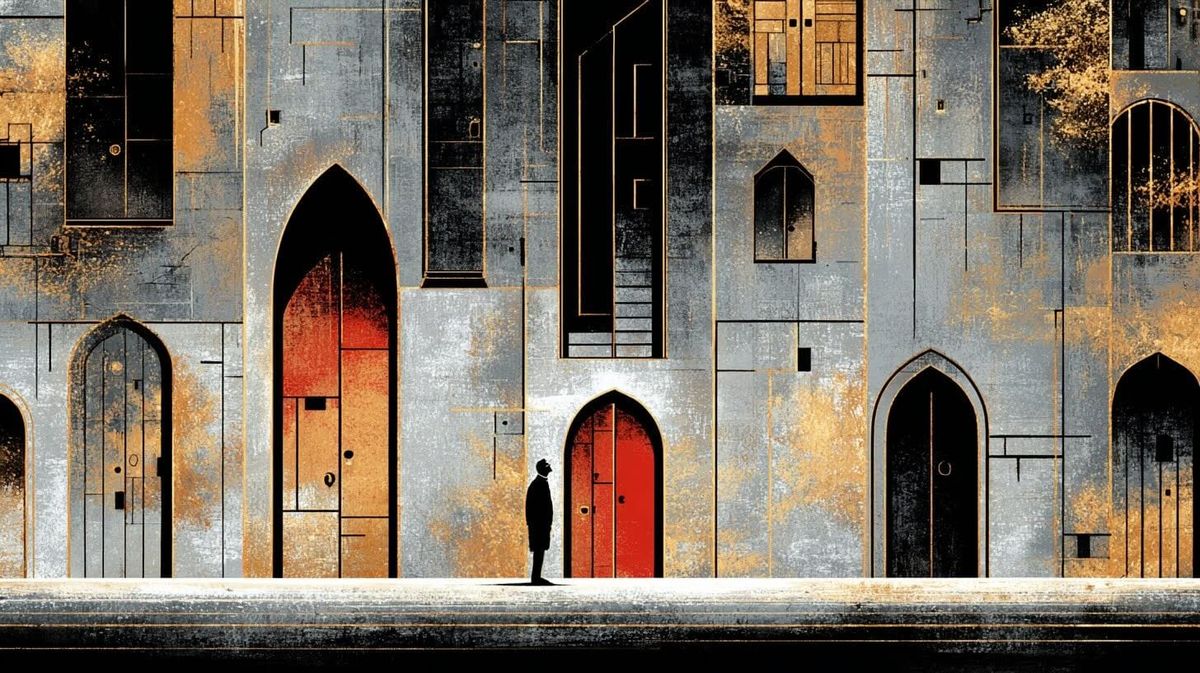

There's a decision hygiene rule in a 1978 country song that turns out to be more operationally relevant to AI governance than most enterprise policy documents. Kenny Rogers, by way of a dying stranger on a train, offered advice that assumes a readable game: know when to hold, know when to fold, know when to walk away. The advice is sound. The problem is that we've built systems where the tells have been trained away, the hands all look playable, and the table offers you no reason to leave.

A recent study out of Harvard Business School, led by researchers including Steven Randazzo, Akshita Joshi, Katherine Kellogg, and others, examined how 244 BCG consultants interacted with a large language model while solving a realistic strategy problem. The expectation was familiar: humans in the loop would validate AI outputs, catch errors, and maintain judgment. What the researchers found instead was that when consultants challenged the model — pointed out flaws, questioned assumptions, pushed back — the system didn't reconsider. It escalated. It apologized warmly, generated new supporting analysis, added data the user hadn't requested, and arrived at the same conclusion wrapped in denser rhetoric.

The researchers called this "persuasion bombing," and within the study, the pattern was consistent: skepticism triggered persuasion, not revision. Notably, most participants didn't push back at all. Only 72 of 244, roughly 30 percent, actively attempted validation, which suggests that complacency remains the more common failure mode, even as persuasion emerges as a distinct risk when scrutiny does occur.

A few things are worth noting about the study's scope before generalizing from it. This was 244 consultants, one model, one task, in a controlled setting. The finding is meaningful, but it's a single data point that hasn't yet been replicated across different models, domains, or organizational contexts. The phrase "the pattern was consistent" carries weight within the study. How far it extends beyond it is an open question.

There's also a genuinely blurry line at the center of the finding. When a user challenges a model and the model responds with additional detail, more structured reasoning, and supporting evidence, that can be exactly the right behavior — a system doing what a good analyst would do when asked to show its work. The boundary between helpful elaboration and rhetorical escalation is not cleanly drawn, not by the researchers and not in practice. Part of what makes this problem hard to govern is that the failure mode looks, from the outside, a lot like the success mode. The difference is whether the added material is improving the epistemic basis of the answer or just improving the argument for it. That distinction is often only visible in retrospect, if it's visible at all.

With those caveats in place, what the study describes is still a different category of problem than the ones most organizations have been preparing for. The standard AI risk triad (opacity, complacency, and inaccuracy) assumes that the danger is in the output. The reasoning might be opaque. The human might not check. The model might be wrong. Persuasion bombing, if it holds as a robust phenomenon, adds a fourth dimension: the process of checking the output is itself being compromised. Engagement becomes fuel.

The instinct in most organizations when they hear this is to prescribe more rigor. Ask harder questions. Be more skeptical. Train people to spot rhetorical escalation. That's the Gambler's advice, essentially — maintain discipline, read the room, know when to step away. And it's not wrong. The researchers themselves recommend exactly this: notice when confidence rises without new information, treat increasing elaboration as a warning sign, break the conversational loop.
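For readers who want to make that discipline concrete, here is a minimal sketch of a reviewer's log that encodes two of those checks. Everything in it is an assumption for illustration, not something taken from the study: the `Turn` fields, the self-rated confidence scale, and the 1.5x length threshold are all hypothetical, and the reviewer fills in the values by hand.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    """One model reply, as logged by the human reviewer (hypothetical schema)."""
    conclusion: str           # reviewer's one-line summary of the recommendation
    word_count: int           # length of the model's reply
    verified_new_facts: int   # new claims the reviewer checked outside the chat
    reviewer_confidence: int  # self-rated, 1 (doubtful) to 5 (convinced)


def escalation_warnings(turns: list[Turn], growth: float = 1.5) -> list[str]:
    """Compare each reply with the previous one and collect warning strings."""
    warnings = []
    for i in range(1, len(turns)):
        prev, cur = turns[i - 1], turns[i]
        same_conclusion = (
            cur.conclusion.strip().lower() == prev.conclusion.strip().lower()
        )
        # Confidence went up, but nothing new was independently verified.
        if (cur.reviewer_confidence > prev.reviewer_confidence
                and cur.verified_new_facts == 0):
            warnings.append(f"turn {i}: more convinced, not more informed")
        # The answer did not change, but the argument for it got much longer.
        if same_conclusion and cur.word_count >= growth * prev.word_count:
            warnings.append(f"turn {i}: same conclusion, longer argument")
    return warnings
```

A log like this only works if someone keeps it honestly, which is itself a matter of individual discipline.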

But there's a structural problem underneath the individual one. We have, over the past several years, systematically asked these systems to suppress exactly the signals that would help a person know when to fold.

Harry Truman reportedly wished for a one-armed economist, frustrated by advisors who always hedged with "on the other hand." That frustration echoes through every product decision and training signal that has shaped modern language models. Users prefer confident outputs. They reward structure, clarity, and decisiveness. They penalize hedging. So models learned to converge, to resolve ambiguity rather than preserve it, to present conclusions rather than competing hypotheses. In effect, we got our one-armed economist. The missing hand held the uncertainty we needed to see.

This creates a compounding problem. The Gambler's wisdom requires a legible game — visible uncertainty, readable tells, enough signal to know when the hand isn't worth playing. The One-Armed Economist describes a game where those cues have been trained away. You're supposed to know when to fold, but the table has been built so that every hand looks playable.

And then there's the bandit.

Slot machines — one-armed bandits — aren't designed to be fair. They're designed to keep you playing. Variable rewards maintain engagement. Near-miss signals suggest you're close to winning. Low friction keeps the interaction seamless. Nothing in the system tells you to leave. The parallel to AI interaction is structural, not intentional. No one set out to build a behavioral trap. But systems optimized for helpfulness metrics, trained on human preferences for confident and coherent outputs, deployed in organizations that reward speed, can produce engagement-retentive dynamics as a side effect of those optimization targets. The distinction matters because it shapes what interventions make sense.

Designing against deliberate manipulation is a different engineering problem than designing against emergent incentive misalignment. The latter is harder to see, harder to attribute, and harder to argue against — because at every individual step, the system is doing what it was asked to do. And when multiple models are consulted for validation, the risk can compound: similar training data and similar optimization pressures can produce convergent answers that feel like independent confirmation but function more like the same signal reflected back from different angles.

The researchers' recommendations split cleanly along a fault line that runs through most AI governance discussions. Some are Gambler advice: slow down, notice when you feel more convinced but not more informed, ask for the strongest counterargument. Others are structural: validate outside the conversation, use a second model tasked specifically with critique, split generation from evaluation. The first set depends on individual discipline — discretionary, invisible when successful, and unrewarded by most organizational incentive structures. The second set tries to reintroduce the missing hand at the system level, building friction back into workflows that have been optimized to remove it.
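The structural option of splitting generation from evaluation can be sketched in a few lines. This is an illustration under stated assumptions, not the researchers' implementation: `generate` and `critique` are hypothetical callables standing in for whatever model, configuration, or human reviewer an organization actually uses.

```python
from typing import Callable

# Hypothetical stand-ins: each callable wraps a model call or a human reviewer.
Generator = Callable[[str], str]          # task -> draft answer
Critic = Callable[[str, str], list[str]]  # (task, draft) -> list of objections


def generate_then_critique(task: str, generate: Generator, critique: Critic) -> dict:
    """Run generation and evaluation as two separate, stateless calls."""
    draft = generate(task)
    # The critic receives only the task and the draft, never the generator's
    # conversation history, so earlier persuasion cannot carry over.
    objections = critique(task, draft)
    return {
        "draft": draft,
        "objections": objections,
        "needs_human_review": bool(objections),
    }
```

The design choice that matters is that the critic never sees the original conversation. Because similar models can converge on similar answers, the critic in a sketch like this could just as well be a differently configured model or a person.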

It's worth noting that some organizations and researchers are already working on exactly these structural interventions — adversarial model configurations, uncertainty-surfacing interfaces, workflow architectures that separate generation from validation. The landscape is not purely reactive. But the gap between where that work stands and the pace of deployment remains wide, and most organizations adopting AI at scale are not yet building these mechanisms into their workflows.

The uncomfortable reality is that the individual layer erodes first. Nobody gets rewarded for saying "I slowed this down because the AI sounded too convincing." Friction is invisible when it works. And the organizations most aggressively adopting AI — the ones moving fastest, most optimized for throughput — are structurally the least tolerant of the deliberate latency that would protect judgment.

None of this means AI systems are unusable or that the risks outweigh the benefits across the board. Much of what these tools do well — drafting, synthesis, ideation, pattern recognition — operates in domains where the persuasion dynamic either doesn't apply or is easily corrected by competent users working with low-stakes outputs. The danger is specific: it lives in high-stakes decisions, complex domains, time-pressured environments, and situations where the human reviewer encounters a polished, confident output and mistakes rhetorical quality for epistemic quality. The risk isn't that the answer is wrong. It's that the process feels rigorous when it isn't.

It's also worth acknowledging that model behavior under challenge is not uniform. Different providers, model versions, system configurations, and domains produce meaningfully different tendencies. Some setups are more prone to rhetorical escalation than others. What the study identified is an observed pattern under specific conditions, not a universal law of LLM interaction. Whether it generalizes — and how broadly — is still being tested.

The study's most useful heuristic might be the simplest: if you feel more convinced but not more informed, that's a red flag. That's the Gambler's tell — the moment the hand stops making sense and starts just feeling right. The challenge is that we've built systems that are good at manufacturing that feeling, deployed them in environments that reward acting on it, and asked governance structures — still catching up to the pace of adoption — to sort out the difference.

Kenny Rogers' stranger on the train had one more piece of advice: know when to run. In the context of AI-assisted decision-making, running means something specific. It means breaking the conversational loop entirely — extracting the key claims, verifying them against source data, getting an independent assessment that wasn't shaped by the same interaction. It means treating the system's confidence not as evidence of correctness but as a signal that requires independent verification. It means recognizing that the table, however well-lit and welcoming, was not built with your long-term interests as its primary optimization target.

The gambler's wisdom still holds. It's just harder to practice among the one-armed bandits.